There was this Online Desktop Question which a few of the GNOME Foundation members expressed surprise (and dismay) at. Bryan does a nice summary of it. “Though the question is about the Online Desktop, in my mind it’s really about a shift in direction and technology and really tests to see that Board members are open to those shifts. It’s the responsibility of the Board members to understand the new technologies and try to enable the people working in that direction where reasonable and prudent for the Foundation to do so.”

Monthly Archives: November 2007

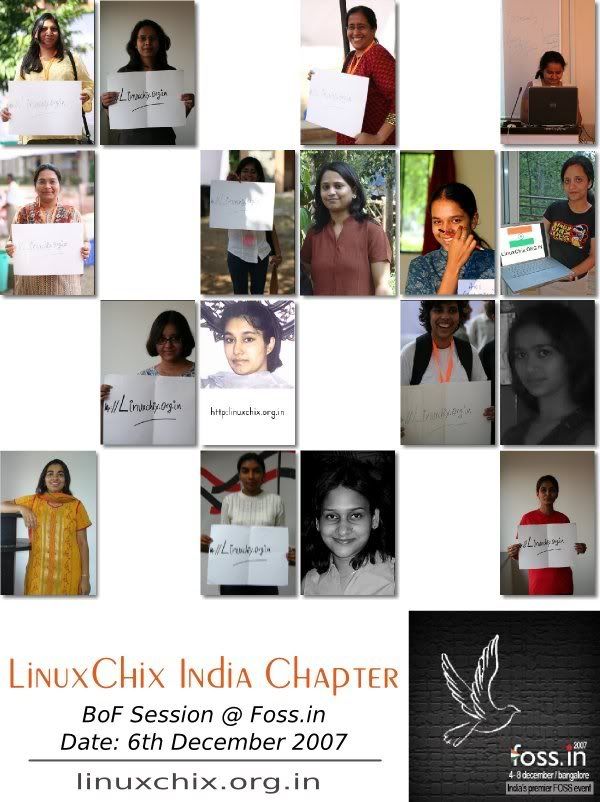

Poster coolness

As linked to by Runa

Who is he ?

That little green thing…

The OLPC effort in India would be going through a sufficiently critical phase. While a large chunk of work is being done at the backend in getting Indic rendering, printing etc fixed on the browser and applications, and in getting the physical keyboard layouts in place – the focus would now need to be put on how to get buy-in for the product. There has been a by now controversial buy-in of the concept; the next obvious stage would be to figure out a “GoToMarket” strategy for the project.

Such a strategy would include the details that allow potential pilot sites to assess where and how they stand vis-a-vis requesting a pilot, a set of codified preliminary requirements for a pilot and a set of objectives or lessons_to_be_gleaned from the pilot. This would translate into having a means to actually have the hardware on the ground. For developers, an emulation environment or (a single unit) serves well as the platform. The platform for a pilot is the hardware itself. A mashup of the hardware with appropriate content would enable the India team to assess and quantify what they want to achieve with the project and where they stand with the current roadmap. Right now there are a large number of queries happening about “How do we pitch in” but barring G1G1 there’s no real input or map towards getting the hardware in place. In fact, there is not much in terms of how to replicate Karjat in various other suitable places.

The other jarring note is the explicit lack of community around the OLPC in India. Conversely, there seems to be a growing sense of attachment to the Asus driven hardware. When I say community it is not the community limited only to developers. From what I read and hear there are a few developers who are actively contributing to it. A community in this case would be the target audience, the potential audience, the potential contributors and the mavens who find more uses for the program by pushing the envelope. A small but not unimportant bit of the task of Community Engineering involves putting in place the infrastructure that allows inclusive participation. One such piece is the Pootle deployment for translators, which is an awesome thing to happen. At this stage of the program in India, a larger part is played by evangelising the Project. Till date, the negative PR that has happened has fortunately been limited to a small set of press notes. A coherent and cohesive effort at Community Relations through the various media available would actually allow more folks to “get the message”. There has been some thought around this for sure:

OLPC cannot survive without a strong community. From where I sit, OLPC engineering is in a classic trap right now -- all work on production, but almost no work on capacity. Community building is capacity building; that's why it's so important.

The reason the “capacity” bit is important over here is because without it the OLPC would only remain as a marvel of what a concerted effort at innovation can achieve in the field of computing technology. And if that is the only reason for OLPC to exist, then it is a sad one indeed. Perhaps it is more of a “vision” thing. Figuring out the intersect between the EEE and the Classmate and the OLPC (in terms of OLPC software) may just be a way forward. Would it be a good thing to have installable LiveMedia with Sugar being available so as to get folks to test-drive and if happy install an OLPC interface on their conventional desktops ? Would that increase the disconnect between the target users and developers ? Is that one of the possible ways ?

GlimmerMan

Someone I know had a very interesting thing to say. We sure do live in interesting times …

And thus begins the debate…

It promises to be an interesting debate and has the potential of becoming a fantastic Board. Thanks Bruno for posting the questions.

The “cost” of software testing

A traditional software development model defines software quality assurance as the “set of systematic activities providing evidence of the ability of the software process to produce a software product that is fit to use” [G Gordon Schulmeyer and James I McManus, Handbook of Software Quality Assurance]. Such systematic activities have been extensively researched, fine-tuned and implemented through various models. The classic statement when it comes to Open Source projects is that “Given enough eyeballs, all bugs are shallow”. The accepted implication in that statement is that the software will not be free of defects at General Availability and mandates community participation, collaboration and feedback in improving the software.

From “A Survey on Quality Related Activities in Open Source” (which is available from the ACM SIGSOFT as Software Engineering Notes vol 25 no 3) by Luyin Zhao and Sebastian Elbaum, the conclusions or lessons learned are interesting:

* the average application spends approximately 40% of its lifecycle in the testing stage. However, 80% do not have a test plan.

* testing efforts are limited before release because open source people tend to rely on other people to look for the defects in their products. However, not all the code developed under the open source model has been revised by a large number of qualified reviewers

* 28% of defects are on interfaces. The dynamic nature of open source and the close interaction between developers and users might be a factor in this defect distribution

* application attributes have a great impact on quality assurance activities. They must be taken into account when performing quality assurance tasks

* inspections are more popular within larger systems

* there are not enough testing tools available for web applications

Jeffrey Jaffe writes about bringing more applications to linux and earlier about the ISV ecosystem but his thoughts seem to be more in terms of a lowering of barriers for the ISVs through judicious usage of standards. That among other things would be some of the relevant points raised at the Software Testing BoF at foss.in.

An objective of testing software is to ensure that the GA product is reasonably defect free. That said, the objective of a “tested and certified” software should be to provide an assurance to the end user who is planning to deploy the application on an operating system (and perhaps middleware) for production operations. Such assurance includes assurances of stability of the “stack” and quality. Not surprisingly, the notion of quality would be rooted in the economics of operations of the end user of the application. “Quality is a complex and multifaceted concept” (D A Garvin, What does “product quality” really mean? MIT Sloan Management Review, 26(1):25-43,1984) and hence it can only be perceived as a combination of the various properties of the product on the economics of the product (and hence the company doing the software project that produces the product). For the end user, one of the concern areas around the economics of “certified” applications is the “cost of maintenance effort”, i.e. the cost of maintaining the deployment of the application, as related to costs incurred due to downtime of the system due to the application. The IEEE Standard Glossary of Software Engineering Terminology defines maintainability as “the ease with which a software system or a component can be modified to correct faults, improve performance, or other attributes, or adapt to a changed environment”.

Certification of a software is aligned with the stated quality goals of the company that produces the software and the company that tests and certifies it (sometimes they are the same company) and thus it has an economic currency attached to it. A simplistic notion of “producing certifiable software” would be to “develop software according to standards”. While standards by themselves are reference points around which development can be undertaken, not all standards can actually be converted into implementation ready tools/widgets/applications. For example, while it might be reasonably easy to test an application against something like LSB, integrating LSB framework during the software development process to ensure that the developed software is “certification ready” would be a bit difficult. Current models of certification and testing of applications are reactive – they test once the software is ready and GA-ed. Software development (irrespective of traditional or open source) models are on the other hand iterative through phases. The need would be to put in place guidance tools based around the standards which can be integrated by the software development companies into their software development processes so as to ensure that each and every build can actually be “certified” aka quality assured. This notion of quality is, in a way, an echo of Philip Crosby’s definition of quality (P B Crosby, Quality is Free: The Art of Making Quality Certain) as “conformance to requirements”. Such requirements include elements like the code quality, flexibility, maintainability, portability, re-usability, readability, scalability, reliability.

Thus, the approach to Software Testing in the light of Software Certification has a few concern areas that need to be looked into:

* creating of tools to test various kinds of software (and not only web applications)

* creating of tools that would provide guidance to software developers in terms of compliance with known standards

* collaborating across a broad spectrum of software technologists towards ABI stability across operating system releases

Failing on any or all of the above would result in “software application hell” on operating systems, whereby the effectiveness or efficiency of the product can be compromised. A small component of the way forward is to look at it from the viewpoint of an independent software developer. A larger component of the roadmap is to ensure that there are measurable quantities which can be used by software developers to put metrics to quality and hence to cost during the development phase. Software quality might be quantified through software error data. The factors (as suggested by Kenneth S Mendis, Quantifying Software Quality) that allow putting metrics to software quality could be:

* error by category

* error density

* error by type

* error by severity

* error arrival rate

* error reporting
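To make a few of these factors concrete, here is a minimal sketch in Python of how error data from a bug tracker might be turned into numbers. Everything in it is an assumption for illustration: the `ErrorReport` record, the field names, the sample data and the choice of "errors per thousand lines of code" for density are mine and are not taken from Mendis.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ErrorReport:
    """Hypothetical record for one reported error."""
    reported_on: date
    category: str   # e.g. "interface", "logic"
    severity: str   # e.g. "critical", "minor"


def error_density(reports, kloc):
    """Errors per thousand lines of code (one common reading of density)."""
    if kloc <= 0:
        raise ValueError("kloc must be positive")
    return len(reports) / kloc


def errors_by(reports, field):
    """Count reports grouped by an attribute such as category or severity."""
    return Counter(getattr(r, field) for r in reports)


def arrival_rate(reports):
    """Average errors reported per day over the observed date span."""
    if not reports:
        return 0.0
    days = [r.reported_on for r in reports]
    span_in_days = (max(days) - min(days)).days + 1
    return len(reports) / span_in_days


# Made-up sample data, only to exercise the functions.
reports = [
    ErrorReport(date(2007, 11, 1), "interface", "critical"),
    ErrorReport(date(2007, 11, 1), "logic", "minor"),
    ErrorReport(date(2007, 11, 3), "interface", "minor"),
    ErrorReport(date(2007, 11, 4), "interface", "minor"),
]

print(error_density(reports, kloc=2.0))
print(errors_by(reports, "category"))
print(errors_by(reports, "severity"))
print(arrival_rate(reports))
```

Numbers like these only become useful when tracked per build, so that a developer can see whether density or arrival rate is trending up or down between releases.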

The generally accepted principles of software testing are:

* test process, test cases and test plan

* techniques, methodologies, tools and standards

* the culture of the organization

The above principles should enable a software developer (or an ISV) to be looking into the feasibility of “software certification” and increase in software quality. What would be good to have at this stage is an attempt at formalization of the technology of certification which would allow the development of methodologies around it. The Certification Service would then be meaningful to have in terms of providing an index of stability and reliability in the software industry aka ecosystem.

gphoto2/gthumb annoyance

Ran into “An error occurred in the io-library (‘Could not claim the USB device’): Could not claim interface 0 (Operation not permitted). Make sure no other program or kernel module (such as sdc2xx, stv680, spca50x) is using the device and you have read/write access to the device” using gphoto2 on Werewolf. A search turned up a set of posts here. Any tips would be appreciated at sankarshan dot mukhopadhyay at gmail dot com. Uploaded some debug output. Turns out that there is a bug on this. Thanks Basil Mohammad Gohar for pointing out the FedoraForum thread.

Deja vu or Vuja de

I was reading this post from Richard and ended up being bemused at whether the world is really flat. In spite of knowing the folks who do Bengali and Bengali (India) localization personally, this got pushed as a request upstream without a slight poke as to “what do you folks think ?” In a way I was anticipating this for a while – a big thanks to the Pidgin team.

There’s a Localization Panel Meet being planned; I’ve blogged earlier about my expectations.

foss.in sweetness

This dude is just amazing – he keeps outclassing himself over and over again. Grab these posters and ogle at them and while you are at it, be assured that the quickest way to figure on them in the coming years is to “start contributing” and to do that register now.

On a small side note, it is sweetness to see my company sponsor a community event – something that was promised here.